The Art of AI: Jamming On The Digital Evolution

I spent 18 years working in broadcast media, a career grounded in the discipline of traditional production, where every frame and every word was built from first principles by teams of skilled professionals. But my training began long before I entered a broadcast suite. It started in a practice room on Saturday mornings with my drum teacher, Dan Bodanis. There was a standard to meet, a tribe of peers to respect, and an unspoken rule: you showed up prepared.

Today, my practice room looks different. So does my creative outlet.

Over the past two years, it has taken the form of building my YouTube channel, DynastyVinyl, alongside a new class of collaborators: generative AI systems like Gemini… and one I’ve nicknamed Lemmy (yes, that Lemmy). What began as experimentation quickly became integration.

This transition from manual creation to AI collaboration isn’t about cutting corners. It’s an adaptive response to an industry already being reshaped in real time.

In the age of generative media, the true “Art of AI” isn’t found in pressing a Generate button. It lives in the space between control and jamming on ideas with your AI collaborator.

Much like a musician within an ensemble, meaningful results come from listening to, interpreting, and complementing the exchange. The modern creator must learn to navigate the tension between AI’s creative autonomy and their own instinct to lead the narrative.

Success belongs to those who remain open yet decisive: flexible enough to pivot when the machine reveals unexpected value, disciplined enough to hold course when human vision must prevail, and skilled enough to know when traditional methods outweigh an AI-generated response.

It is a process rooted in determination, consistency, and, above all, the resilience to evolve without surrendering authorship.

The High Cost of the Digital Apprenticeship

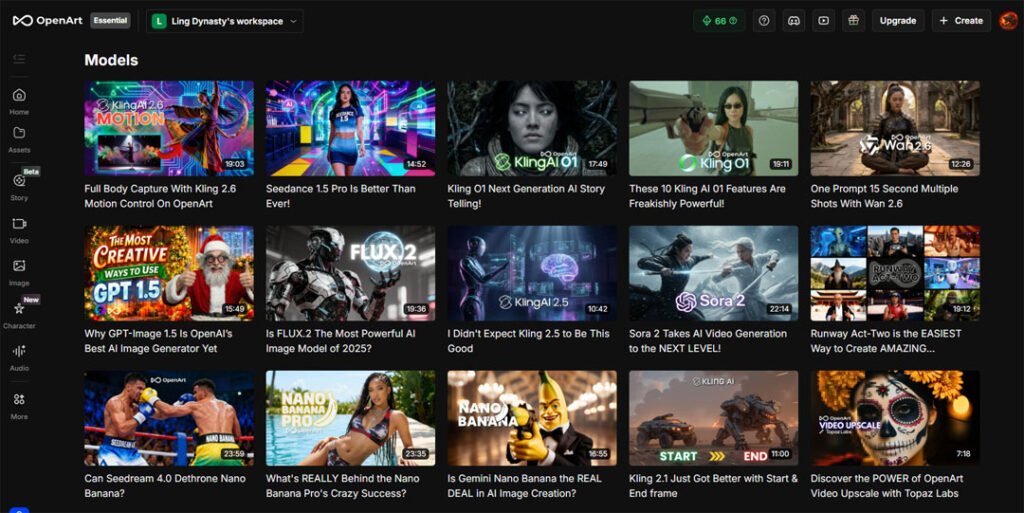

Generative AI is rapidly reshaping the modern content landscape. Not as a passing trend, but as a foundational shift in how ideas move from concept to screen. I spent the better part of a day surfing the terrain… studying the platforms that creators are gravitating toward — systems like OpenArt, Luma AI, Runway, Google’s Veo, and Pika. It’s a vast and evolving ecosystem, each platform offering its own creative philosophy, technical strengths, and subscription pathways depending on whether you’re an independent creator or operating at studio scale.

Based on my early research and, admittedly, a bit of instinct (okay, eagerness), I chose to begin with OpenArt.

What drew me in was the breadth of model selection and the sense that the learning curve paralleled tools like Luma or Google’s generative systems. Familiar enough to onboard quickly… but deep enough to reward experimentation.

And in practice, it proved even more intuitive than I expected. So intuitive, in fact, that I moved through my monthly credits faster than anticipated. Not out of waste… but out of creative momentum. Exploration has a cost, especially when you’re testing the outer edges of what a tool can do.

When I first opened OpenArt AI, I viewed it as a tool. I was wrong. It is an apprenticeship, and the tuition is steep. All packages are based on an annual subscription. I went with the $7/month package (plus tax, that’s $90 for the year), thinking that for my needs as a single content creator with one long-form post per month, 4,000 monthly credits would be plenty. Not even close!

I burned through all 4,000 credits in 48 hours. I wasn’t just “generating”; I was learning to speak a new language. I had to learn the “credit burn” the hard way: image vs. video, 720p vs. 1080p, prompt optimization, sound vs. no sound. Every action costs credits. What I came to realize is that a subscription doesn’t buy you results. It buys you the right to fail until you find the rhythm. When my credits ran out, I ran into one of the few structural limitations within OpenArt.

At the moment, there isn’t a flexible top-up option. Upgrading requires committing to a full annual subscription. An investment that, by design, locks you into their ecosystem for the duration. Now, there’s a discipline benefit to that model. It forces you to slow down… to learn the platform more deeply… to extract its full creative range rather than hopping endlessly between tools, and perhaps leaving a trail of rotten reviews in the process.

But practically speaking, it also means timing matters. For me, that timing pushes my next window of experimentation to March. A short pause, but a noticeable one, especially in a landscape evolving as quickly as generative media. And that’s the part that stings a little. Because the platform itself is remarkable. The model variety, the visual fidelity, the creative ceiling! It’s all there waiting to be explored!

So while I’m temporarily on the sidelines with OpenArt AI, the curiosity hasn’t dimmed. If anything, it’s building momentum. I’m looking forward to stepping back in… and seeing just how far I can push it next. With that reality in front of me, I started looking at adjacent platforms, systems like Luma AI and Runway, both offering monthly subscription models that better aligned with my current financial bandwidth. I ultimately landed on Luma. Not because it replaced OpenArt creatively, but because the pricing structure, model access, and familiar UI made the transition feel less like a reset, and more like a continuation.

I don’t view the shift as a technical setback. If anything, it was a necessary rhythm change. The project in front of me still had momentum. The strategy behind it still felt game-changing. And when that alignment happens, when instinct, timing, and technology all meet, you don’t stall out over tooling logistics. You adapt so the idea can keep breathing. Because in this landscape, you’re not simply “buying” content generation. You’re investing in the stamina required to stay in the arena long enough for the output to finally match what you heard in your head from the start. And for me, stepping sideways to keep moving forward was the only call to make.

k.d. lang – Failed AI Text to Image

Model: OpenArt Photorealistic

Output: Widescreen 16:9

Number of images: 4

Credits spent: 120

Prompt: A cinematic, over-the-shoulder shot from behind k.d. lang in 1991, performing on 'The Tonight Show' stage. She is wearing a perfectly tailored, sharp-shouldered dark grey pinstripe suit with her signature short-cropped hair. She leans into a vintage chrome ribbon microphone, her posture full of passion as she sings. The background shows the classic 1990s late-night talk show studio audience in tiered seating. High-contrast studio lighting, 35mm film grain, nostalgic 90s television aesthetic, elegant and powerful atmosphere.
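For a sense of scale, the 120 credits spent on this batch can be set against the 4,000-credit monthly allowance described earlier. The sketch below is only back-of-the-envelope arithmetic: it assumes every batch costs the same flat amount, and the function name `runs_affordable` is a made-up helper, not anything OpenArt provides. Real costs vary by model, resolution, and output type.

```python
from typing import Tuple


def runs_affordable(budget: int, cost_per_run: int) -> Tuple[int, int]:
    """Return (full runs, leftover credits), assuming a flat cost per run."""
    if cost_per_run <= 0:
        raise ValueError("cost_per_run must be positive")
    return divmod(budget, cost_per_run)


# Figures taken from this article; the flat-cost model is an assumption.
MONTHLY_CREDITS = 4000   # monthly allowance on the plan described above
IMAGE_BATCH_COST = 120   # one batch of 4 images in the test above

batches, leftover = runs_affordable(MONTHLY_CREDITS, IMAGE_BATCH_COST)
print(f"{batches} batches of 4 images, {leftover} credits left over")
```

Under that flat-cost assumption, a month of credits covers only a few dozen image batches, before any video work is attempted.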

Generative AI - Directing Realism and the "Johnny Carson" Rule

The first lesson in this new creative era revealed itself through access. Platforms. Credit systems. Model ecosystems. Subscription structures. Understanding where and how to create was the entry point. It was the digital equivalent of walking into a studio for the first time and learning where the instruments were kept. But access alone doesn’t produce resonance. Tools don’t create realism. Proximity to technology doesn’t guarantee authorship, and the moment your work begins engaging with cultural history and icons, with real faces, real legacies, real emotional memory, the margin for error narrows dramatically (IMO). To use drumming as an analogy, there’s only one way to play “Tom Sawyer” by Rush or “Rock and Roll” by Led Zeppelin. And that realization introduced what I’ve come to call the “Johnny Carson Rule.” When a story intersects with real-world figures, especially cultural fixtures like Johnny Carson, there’s no latitude for creative drift.

AI loves to embellish. It fills gaps. It improvises.

But when legacy is involved, improvisation becomes what musicians like to call “playing busy.” Actually, they use another word, but this is a family program. It suggests a player performing for themselves and not listening. On a platform such as YouTube, your every action is micro-critiqued. Concept and story be damned! If your interpretation deviates a fraction from another’s perception, they let you know by surfing away, leaving a comment, or both!

In this space, realism isn’t aesthetic preference. It’s narrative currency. Achieving it requires stepping into the mindset of a director, and not a spectator waiting to be impressed by a render. While producing my latest DynastyVinyl episode, “Pullin’ Back the Reins: The Night Johnny Carson Cried,” I learned quickly that ultra-realistic scene building isn’t about spectacle. It’s about patience. The key, I believe, is to design moments in fragments. Five seconds at a time. I map the camera language. I try to provide the blocking, and I instruct the generative AI model to operate inside the guardrails of reality rather than roam freely through stylistic interpretation.

It’s meticulous and tedious, and I run the risk of over-prompting and over-restricting the AI model’s creative freedom. But that discipline is necessary. It ensures the technology remains in service to the story, preventing emotionally grounded narratives from getting buried beneath the clutter of over-produced AI noise.

What also emerged through the process was a structural prompting method that consistently delivered grounded results:

Who, What, Where, When.

Lock the subject. Define the action. Anchor the environment. Establish a time.

That four-point framework became essential when working in text-to-image systems like Nano Banana Pro, where character fidelity and historical accuracy had to be resolved before any motion work could begin. I had to learn this the hard way.
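One way to picture the Who, What, Where, When framework is as a small prompt template that refuses to render until all four elements are filled in. This is an illustrative sketch only: the `ScenePrompt` class, its field names, and the optional `style` field are assumptions made up for this example, not part of any platform’s API. The sample values are borrowed from the k.d. lang prompt earlier in this piece.

```python
from dataclasses import dataclass


@dataclass
class ScenePrompt:
    """Hypothetical container for the four-point framework."""
    who: str         # lock the subject
    what: str        # define the action
    where: str       # anchor the environment
    when: str        # establish a time
    style: str = ""  # optional look-and-feel notes (an added assumption)

    def render(self) -> str:
        required = {
            "who": self.who,
            "what": self.what,
            "where": self.where,
            "when": self.when,
        }
        # Refuse to build a prompt with any of the four elements missing.
        missing = [name for name, value in required.items() if not value.strip()]
        if missing:
            raise ValueError("missing prompt elements: " + ", ".join(missing))
        parts = [value.strip() for value in required.values()]
        if self.style.strip():
            parts.append(self.style.strip())
        return ", ".join(parts)


prompt = ScenePrompt(
    who="k.d. lang in a tailored dark grey pinstripe suit",
    what="leaning into a vintage chrome ribbon microphone as she sings",
    where="on 'The Tonight Show' stage",
    when="1991",
    style="35mm film grain, high-contrast studio lighting",
)
print(prompt.render())
```

The point of the validation step is the same as the framework itself: settle subject, action, environment, and time before spending a single credit on a render.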

If the still frame isn’t believable, the moving frame never will be. Fine-tuning character likeness, wardrobe, lighting, and camera perspective at the image stage isn’t optional. Think of it as foundational. Skip that step, and you burn more credits chasing corrections downstream. The same principle applied to output settings. Selecting the highest render quality does cost more upfront. However, in practice, it prevents the far greater expense of iterative guesswork. There are no real shortcuts here. Only deferred costs.

As the pipeline moved from image to motion, certain models distinguished themselves in their ability to preserve realism under movement constraints, particularly Wan 2.6, Flux Pro, and Kling. Each demonstrated strength in maintaining facial integrity, broadcast lighting logic, and restrained camera motion. All critical when reconstructing historically recognizable figures and moments. Because ultimately, the “Johnny Carson Rule” is simple:

When the subject is real, the responsibility to reality doubles. The director’s job isn’t to let AI perform here. It’s to make sure it remembers the conversation.

The Tribe and the Algorithm

This level of dedication takes me back to Saturday morning drum lessons. I didn’t practice because I wanted fame. I practiced because I wanted the respect of the tribe in that room. The nod from the players who’d put in the hours. The ones who could hear whether you had or hadn’t.

Today, my tribe lives here.

A dedicated 500 subscribers. In the vast sprawl of YouTube, 500 might read as small. But I can tell you firsthand, it’s not small. It’s earned. It’s 500 individuals who chose to give me their time, their attention, their trust. That’s a foundation, not a metric.

Dan used to say, “It takes 21 days to make something a habit. If you love something, do it for 21 days and make it your habit.” My goal for 2026 is to cross the 1,000-subscriber threshold, doubling our growth in half the time. And I say “our” intentionally, because that trajectory isn’t built on luck, and it’s not crafted alone. It’s built on habits: the habits forged over the last 700 days of showing up, refining, and listening, taking notes from my peers, and collaborating with Lemmy, my AI partner.

I’m fiercely protective of my tribe. That’s why I’m not linking the latest episode of DynastyVinyl here. I can assure you, it’s not out of secrecy. I’m absolutely thrilled with the results. It’s out of respect for the ecosystem we’ve built. I want the dedicated to find the work the way they always have. Organically. Intentionally. Because a 30-second click from a casual passerby (you) doesn’t build momentum. It disrupts it.

To follow a creator is to give them your time. And in this space, attention isn’t casual currency. It’s the most valuable donation you can offer.

The Van – Image to Video

In this sequence, I leaned on two original photos of the van I drove across Canada with a band I toured with back in 1999. Bringing these images to life unearthed distant memories of one of the most exciting times in my life. And what struck me was how effectively generative AI handled the restraint of those frames: a simple, grounded composition rendered back with an almost archival level of detail. Granted, the van looks in better shape than I remember. And I can safely say there were no street lamps on the prairie leg of the tour. That was something AI added without direction. However, it reaffirmed something I’m learning quickly: when you give these systems realism to begin with… they tend to honor it. The results impressed me enough that they sparked a working title for a future DynastyVinyl episode:

“That Time I Met David Bowie… When I Still Had a Full Head of Luscious Hair — Based on a True Story.”

Too long?

Luma AI, Model: Wan 2.6, Resolution: 1080p, Duration: 10s, Total credits: 1500

Prompt: The white van continues driving forward along the icy highway toward the distant mountains. Dense, swirling snow and wind gust across the road from left to right, partially obscuring the trees. The van’s taillights glow through the haze. High-velocity snow particles and realistic atmospheric fog create a sense of a heavy winter storm. Cinematic, low-visibility tracking shot with natural, heavy motion.

Conclusion

My journey from the drum kit… to broadcast media… and now into the digital frontier of DynastyVinyl has taught me something enduring: the tools change. The requirements never do. Discipline still matters. Preparation still matters. Respect for the craft, and for the audience, still matters.

What I’ve come to understand is that the “Art of AI” isn’t a detour around hard work… it’s simply a new way of jamming: with a new ensemble, a new soundstage, and a new set of instruments that still demand direction, taste, and restraint.

Through the credit burns of this digital apprenticeship… through the frame-by-frame directorial control required to achieve realism… through the strategic respect paid to the rhythms of the YouTube algorithm… one truth keeps surfacing:

Resilience is still the currency.

I approach my AI collaborators with a “Yes, and…” mindset — the same way a musician listens to the other players on that stage. They are partners in the session, and this is a conversation.

The drive to create, that impulse, didn’t arrive with technology. It’s been there my whole life. It’s the same force that pushed me into practice rooms for 4-6 hr sessions, and now into this evolving digital arena (12-14 hr sessions).

And while the road to 1,000 subscribers stretches ahead like prepping for a cross-Canada tour…

I’m consistent.

And I’m ready for the next Saturday morning lesson.